- Blog

- Best Note Taking App For Mac On Powerpoint

- Dell P713w Printer Driver For Mac 10.12

- Psps Emulator Mac

- Download.com Video Editor For Mac

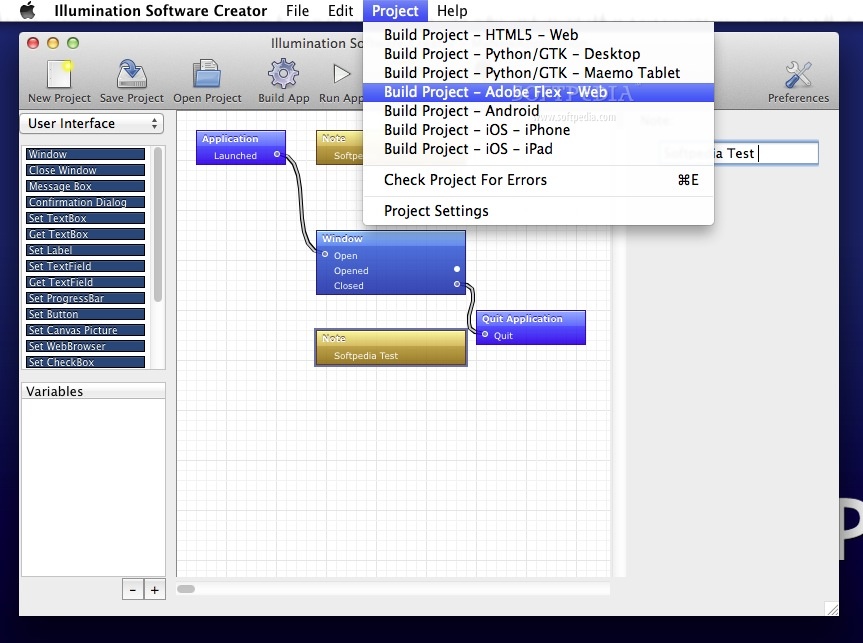

- How To Build A Package For Os X

- What Makese Sense For Xamarin Development - Windows Or Mac?

- Screen Printing Software For Mac

- Official Release Date For Visual Studio For Mac

- Best Mac Foundation For Olive Skin Tone

- Stb Emulator Mac Id Reset

- Free Minecraft Demo For Mac

- Where To Find Rane 62 Driver For Mac

- Quicktime Player For Mac Editing

- Begin Again Video Download For Mac

- Video Grabby For Mac

- Create Windows Bootable Usb On Windows For Mac

- Current Mac Os X Market Shares 2017

- Best Fps Boosting Clients For Minecraft 1.8.9 Fora Mac

- App For Mac Personal Assistant

- Visio 2016 Professional For Mac

- Hp Scanning App For Mac Os

- Windows 7 For Mac Buy Online

- Staples Quickbooks For Mac

- Flash Player For Mac For Chrome

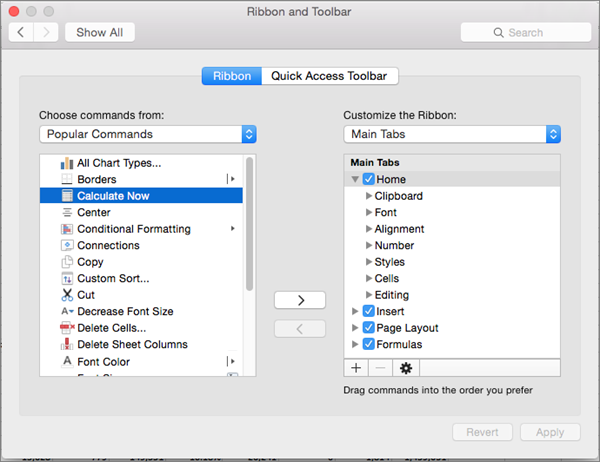

- How To Find Duplicates In Excel For Mac 2008

- Partitioning An External Hard Drive For Mac And Windows

- Samson Meteor Driver For Mac

- Samsung S8 Software For Mac

- Microsoft Visual Studio Enterprise 2017 For Mac

- Best Gaming Mouse For Mac 2017

- Adobe Muse Cc 2015 Mac Os X

- Brother P Touch Label Maker 2710 Driver Download For Mac

- Easy Free Photoshop For Glamour For Mac

- Opensource Gis Viewer For Mac

- Online jupyter notebook online editor

- Katekyo hitman reborn fanfiction tsuna abandoned

- G clip plug-in

- Imovie 10-0-5 remove zoom

- Just cause 2 mods spiderman

- Plotter seiki sk720t

- Wrestlemania 2018 match by match live blog

- Propellerhead recycle kickass

- Focusrite saffire driver

- Syncios free download

- Civilization revolution 2 vita review

- Fightcade jojo rom download

|


The Windows desktop platform has long been the most popular platform among C++ application developers. With C++ and Visual Studio, you use Windows SDKs to target many versions of Windows ranging from Windows XP to Windows 10, which is well over a billion devices.

Popular desktop applications like Microsoft Office, Adobe Creative Suite, and Google Chrome are all built using the same Win32 APIs that serve as the foundation for Windows desktop development. Developing for Windows desktop allows you to reach the highest number of users on any non-mobile development platform. In this post we will dive into the “Desktop development with C++” workload inside Visual Studio and go over the workflow used to develop a desktop app.

Acquiring the tools

After installing Visual Studio, open the Visual Studio Installer from the Start menu and navigate to the Workloads page.

We need to install the “Desktop development with C++” workload, which provides the tools needed for developing Windows desktop applications. The Win32 API model used in these types of applications is the development model used in Windows versions prior to the introduction of the modern Windows API that began with Windows 8.

This modern API later evolved into the Universal Windows Platform, but traditional desktop development using Windows APIs is still fully supported on all versions of Windows. When you install the C++ Windows desktop workload, you have many options to customize the installation by selecting your desired tools, Windows SDKs, and other additional features like recent ISO C++ standards candidates such as modules support for the STL. The core C++ compiler and libraries for building desktop applications that target x86 and x64 systems are included in the VC++ 2017 v141 toolset (x86, x64). Notable optional tools include support for MFC and C++/CLI development. In the following examples, we will show how to create an MFC app, so this optional component was installed.

Opening code and building

After installing the C++ desktop workload, you can begin coding in existing projects or you can create new ones. Out of the box, Visual Studio can open any folder of code and be configured to build using CMake, a cross-platform build system. The Visual Studio CMake integration even allows you to use another compiler: open the directory containing your CMakeLists.txt files and let VS do the rest. Of course, there is also full support for Microsoft’s own build system called MSBuild, which uses the .vcxproj file format. MSBuild is a robust and fully featured build system that allows building projects in Visual Studio that target Windows. An MSBuild-based project just requires a .vcxproj file and can be built in the IDE.

In Visual Studio 2017, you can also simply open a folder of code files and immediately begin working in it. In the background, Visual Studio will index your files and provide IntelliSense support along with refactoring and all the other navigation aids that you expect. You can create custom .json scripts to specify build configurations.

Creating new projects

If you are creating a new project from scratch, you can start with one of a variety of project templates. Each template provides customizable build configurations and boilerplate code that compiles and runs out of the box:

| Project Type (folder) | Description |
| --- | --- |
| Win32 | The Win32 API (also known as the Windows API) is a C-based framework for creating GUI-based Windows desktop applications that have a message loop and react to Windows messages and commands. A Win32 console application has no GUI by default and runs in a console window from the command line. |
| ATL | The Active Template Library is a set of template-based C++ classes that let you create small, fast Component Object Model (COM) objects. |
| MFC | MFC (Microsoft Foundation Classes) is an object oriented wrapper over the Win32 API that provides designers and extensive code-generation support for creating a native UI. |
| CLR | C++/CLI (Common Language Infrastructure) enables efficient communication between native C++ code and .NET code written in languages such as C# or Visual Basic. |

Project templates are included for each of these types of desktop applications depending on the features you select for the workload.

Project Wizard

Once you have selected a template, you have the option to customize the project you have selected to create. Each of these project types has a wizard to help you create and customize your new project. The illustrations below show the wizard for an MFC application.

The wizard creates and opens a new project for you, and your project files will show up in Solution Explorer. At this point, even before you write a single line of code, you can build and run the application by pressing F5.

Editing code and navigating

Visual Studio provides many features that help you to code correctly and more efficiently. Whether it be the powerful predictive capabilities provided by IntelliSense or the fluid navigation found in Navigate To, there is a feature to make almost any action faster inside Visual Studio. Let Visual Studio do the work for you with autocompletion simply by pressing Tab on the item you want to add from the member list.

You can also hover over any variable, function, or other code symbol and get information about that symbol using the quick info feature. There are also many great code navigation features to make dealing with large code bases much more productive, including Go To Definition, Go To Line/Symbols/Members/Types, Find All References, View Call Hierarchy, Object Browser, and more. Peek Definition allows you to view the code that defines the selected variable without even having to open another file which minimizes context switching. We also have support for some of the more common refactoring techniques like rename and extract function that allow you to keep your code looking nice and consistent.

Debugging and Diagnostics

Debugging applications is what Visual Studio is famous for! With a world-class debugging experience that provides a plethora of tools for any type of app, no tool is better suited to debugging applications that target the Windows desktop platform.

Powerful features like advanced breakpoints, custom data visualization, and debug-time profiling enable you to have full control of your app’s execution and pinpoint even the hardest to find bugs. View data values from your code with debugger data tips. Take memory snapshots and diff them to reveal potential memory leaks, and tackle the notoriously hard problem of memory corruption from inside Visual Studio. Track live CPU and memory usage while your application runs and monitor performance in real time.

Testing your code

Unit testing is a very popular way of improving code quality, and test-driven development is fully supported inside Visual Studio. Create new tests, then track and run them easily. Testing as you go helps find problems as they arise instead of later on, when things are harder to isolate.

Visual Studio offers both native and managed test project templates for testing native code, which can be found in the Visual C++ / Test section of the new project templates. Note that the native test template comes with the C++ desktop workload and the managed test comes with the .NET desktop workload.

Working with others

Besides all of the individual developer activities that Visual Studio makes more productive, collaboration is also directly integrated into the IDE. Visual Studio Team Services is a suite of features that optimize the team collaboration process for software development organizations. Create work items, track progress, and manage your bug and open issue database all from inside Visual Studio. Git is fully supported and works seamlessly with the Team Explorer, allowing for easy management of branches, commits, and pull requests.

Once that is set up, you can track the source code of your desktop applications in Visual Studio Team Services. Visual Studio Team Services also simplifies continuous integration for your desktop applications. Create and manage build processes that automatically compile and test your apps in the cloud.

Windows Store packaging for desktop apps

When you are ready to deploy your desktop application, you would typically build an executable (.exe) and possibly some libraries so that your application can run on a Windows device. This allows you to easily distribute your application however you like, for example via a download from your website or even through a third-party sales platform such as Steam. A new option for Windows desktop apps is to be available in the Windows Store with all the advantages that entails.

You can package and distribute your Win32 application through the Windows Store. When targeting Windows 10, this can provide advantages including streamlined deployment, greater reach, simpler monetization, simplified setup authoring, and differential updates.

Try out Visual Studio 2017 for desktop development with C++ and share your feedback. For problems, let us know via the option in the upper right corner of the VS title bar.

Where is C++/CX in the list?

Where is C++/WinRT? Here is what things look like to me: We have this newly built WinRT interface, all shiny in object orientation and allegedly superior to the C API of Win32. WinRT is written in C++, yet there is no support, virtually no documentation, and subpar-to-nonexistent tooling to call it via its own native language: C++.

This situation is truly pathetic. It has never been a worse time to be a C++ developer. Microsoft has its head thoroughly embedded up its own fundament and has failed on nearly every C++ promise they made. A new Visual Studio installer!

OOOOOooooo shiny.

VR with Metal 2

WWDC 2017 - Session 603 - macOS

Metal 2 provides powerful and specialized support for Virtual Reality (VR) rendering and external GPUs. Get details about adopting these emerging technologies within your Metal 2-based apps and games on macOS High Sierra. Walk through integrating Metal 2 with the SteamVR SDK and learn about efficiently rendering to a VR headset. Understand how external GPUs take macOS graphics to a whole new level and see how to prepare your apps to take advantage of their full potential.

Good morning, everyone. Welcome to VR with Metal 2. My name is Rav Dhiraj, and I'm a member of the GPU Software Team at Apple. So, as you saw in our Introducing Metal 2 Session, we've enabled some great new features this year.

And in this session, I'll be focusing specifically on the support for VR that we're adding with Metal 2. So, I'll start with a brief summary of what we've enabled in macOS High Sierra. And then, take a deep dive into what's required to build a VR app. And then, end by providing some details on how you can take advantage of the new external GPU support that we've added to the OS. I hope everyone here knows what virtual reality is. But just in case, it's an immersive 360-degree 3D experience, with direct object manipulation using controllers. And an interactive room-sized environment that you can explore, thanks to highly accurate motion tracking.

Now, we at Apple think that this is a great new medium for developers like you to create new experiences for our users. And Metal 2 enables this support in three ways. First, by providing a fast path to present the frame directly to the VR headset with our new Direct to Display capability. Second, by enabling new features like Viewport Array that are specifically targeting VR. And finally, by providing that foundational support for external GPUs, so that developers have a broader range of VR-capable Mac hardware to work on. So, let's get right into what we've enabled with macOS High Sierra.

So, we've added built-in plug and play support for the HTC Vive VR headset. And this headset works in conjunction with Valve's SteamVR runtime, which provides a number of services, including the VR compositor.

Valve is also bringing their OpenVR APIs to macOS, so that you guys can create VR apps that work with SteamVR. We've been working really closely with Valve over the last year to align our releases, and both SteamVR and OpenVR are available to download in beta form, this week. So, before I go on, I'd like to describe what the VR compositor actually does. So, in a nutshell, it distorts the image that your app renders to account for the lens optics in the VR headset. In this example, you can see the barrel distortion that the compositor is applying to account for the pincushion effect of the lenses. Now, in practice, there's actually a lot more complexity under the covers.

And the compositor has to handle things like chromatic aberration, and also presenting a Chaperone UI, so that developers know the bounds of their VR space. Now that you have built-in support for a VR headset, a VR compositor with SteamVR, and an API to write to, let's dive right into how you build a VR app. Well, you have two options. Your first is to use an existing game engine that has VR support. This is a great option that many developers prefer, because it hides some of the complexities of the VR compositor. It also provides a familiar content creation tool chain.

Now, your second option is to write a native VR app that calls OpenVR directly. This gives your app full control of rendering and synchronization with the VR compositor, but at the cost of some additional complexity. Which path you take depends on the goals of your app. Let's start by talking a bit about the game engines. So, you saw Epic's Unreal Engine 4 in action in the keynote, and it's a powerful platform on which to build your VR experiences.

VR support will be coming later this year, and you can find tutorials and other information on Epic's website. We're also really excited that Unity is bringing VR support to macOS in a future release of their engine. We've been working closely with them to ensure that the engine is optimized for both VR playback and development using Metal. So, I'd like to take a moment, at this point, to talk about a specific Unity title called Space Pirate Trainer. So, we've been collaborating with Unity and I-Illusions to bring an early preview of Space Pirate Trainer to macOS. And the speed at which I-Illusions was able to bring their app to our platform was truly astounding. They had a working build in just a few hours, then a stable, fully playable game in just a handful of days.

We've been having tremendous fun playing with this game, and think that it's a great representation of the type of VR experience that you can build with Unity. We hope you get a chance to check it out at WWDC. We think you'll love it, as well. So, Unity and Unreal Engine 4 are two great options for VR development. But you can, of course, choose to write a native SteamVR app that calls the OpenVR framework directly.

Now, we'll have details on how you can add the framework to your app, later in the session. But you can download binaries and API documentation at the OpenVR GitHub. There'll also be a Metal specific sample app available to download in the near future.

But to give you a taste of what's involved, I'd like to now, provide a primer on VR app development in a segment that I like to call VR App Building 101. So, we're going to cover a few things, here. We'll start with an overview of some of the challenges involved in VR development. And then, talk a bit about some unique considerations on our platform. And then, take a deep dive into the anatomy of a VR frame. And then, end with some best practices specific to VR apps.

So, we've got a lot to cover, here. So, let's get started with the overview.

So, a traditional non-VR workload targeting a 60Hz display has around 16.7 milliseconds to do work for the frame. And in many cases, the app has the entire frame budget to do work on the GPU. A VR workload, on the other hand, has to target a 90 frame per second display. And it needs to hit this target to achieve a smooth stutter-free experience on a headset like the Vive.

So, this reduces the available frame budget to around 11 milliseconds. Additionally, the VR compositor has to do work on the GPU to prepare the frame for the VR headset. This can take up to 1 millisecond, leaving your app an effective frame time budget of around 10 milliseconds, which is about 60% of the non-VR case. And if that wasn't enough, your app also has to do more work every frame. This includes stereo rendering for the left and right eye. And also, rendering to a higher resolution, in many cases.
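The budget figures quoted above follow directly from the refresh rates. As a minimal sketch of that arithmetic (the function and variable names are illustrative, and the 1 millisecond compositor cost is the upper bound quoted in the talk rather than a measured value):

```python
def frame_budget_ms(refresh_hz: float) -> float:
    """Time available per frame, in milliseconds, at a given refresh rate."""
    return 1000.0 / refresh_hz


desktop_ms = frame_budget_ms(60)  # traditional 60 Hz display
vr_ms = frame_budget_ms(90)       # 90 Hz headset such as the Vive
compositor_ms = 1.0               # upper bound quoted for compositor GPU work

app_ms = vr_ms - compositor_ms    # what is left for the app's own rendering
ratio = app_ms / desktop_ms       # share of the non-VR budget

print(f"60 Hz budget: {desktop_ms:.1f} ms")
print(f"90 Hz budget: {vr_ms:.1f} ms")
print(f"App budget after compositor: {app_ms:.1f} ms ({ratio:.0%} of the 60 Hz case)")
```

The result lands at roughly 10 milliseconds and a little over 60%, which matches the rounded numbers in the talk.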

The Vive headset has a resolution of 2160 x 1200. That's 25% more pixels than a 1080p display. Additionally, many VR apps render at a 1.2 to 1.4x scale factor to further improve quality. So, in summary, your app has a lot more work to do in less time. Welcome to VR development.

Let's talk about some platform specifics. So, Metal 2 introduces a new Direct to Display capability for supported headsets like the Vive.
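The pixel comparison can be checked with a few lines of arithmetic. This is a sketch only: it assumes "1080 display" means 1920 x 1080, and it assumes the 1.2 to 1.4x scale factor is applied per axis (so pixel count grows with its square), which the talk does not state explicitly.

```python
def pixels(width: int, height: int) -> int:
    """Total pixel count of a render target."""
    return width * height


vive = pixels(2160, 1200)       # combined panel resolution quoted in the talk
full_hd = pixels(1920, 1080)    # assumption: "1080 display" means 1920 x 1080
extra = vive / full_hd - 1      # fraction of additional pixels

print(f"Vive: {vive:,} px, 1080p: {full_hd:,} px, extra: {extra:.0%}")

# Assumption: the scale factor applies to both width and height.
for scale in (1.2, 1.4):
    scaled = vive * scale ** 2
    print(f"scale {scale}: about {scaled / 1e6:.1f} million pixels per frame")
```

Under the per-axis assumption, supersampling alone raises the shading load by roughly 1.4x to 2x on top of the higher base resolution.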

This is a low latency path that bypasses the OS window compositor, and gives the VR compositor, like SteamVR, the direct ability to present a surface onto the VR headset. This avoids any pixel processing or additional copies by the OS. Now, it's worth noting that in order to guarantee this fast path, macOS does not treat VR headsets as displays. They're hidden from the system and offer no extended desktop capabilities. So, to summarize, we move from a model where an app presents to a display through the OS window compositor to one where a VR app can present to a headset directly through the VR compositor. And that's our Direct to Display capability for VR with Metal 2.

Keeping with the theme of macOS platform specifics, let's talk a bit about how your app selects a Metal device to use. So, in macOS, the VR compositor can query the OS to find the Metal device for the GPU that the headset is attached to.

And for performance reasons, your app will want to select the same device that the compositor is using. So, we've worked with Valve to make sure that there's an API to do this, simply called GetOutputDevice, to get the Metal device that you should render to. It's that simple.

Now, let's talk about managing drawable surfaces on macOS. So, the VR compositor and your app each maintain separate pools of drawable surfaces. And in a typical frame your app will render to textures that it owns and submit these to the VR compositor. These will get composited onto a surface that the compositor owns, and that's the surface that'll get presented to the headset.

And on macOS, IOSurfaces are ideal for transferring this rendered data from your app to the compositor. So, make sure that you create Metal textures that are backed by IOSurfaces. As a refresher, let's take a quick look at how you create those textures.

So, you'll want to build a texture descriptor that specifies the Render Target Usage flag, since your app will be rendering into it, but also the Shader Read Usage flag, since the compositor will be sourcing it as an input. And then, to create your left and right eye textures, you simply pass IOSurfaces that you previously allocated and this texture descriptor to newTextureWithDescriptor.

I'd like to now take a few minutes to describe a typical frame in a VR app. This is important, because your app and the VR compositor need to work in lock step. As I noted before, the rendered output of your app will be passed to the VR compositor for additional processing on the GPU.

And since the GPU is a shared resource, synchronization and when work is scheduled matters. So, let's start at the beginning of the frame.

Your app will need to query the VR system to get the headset poses that it needs to render the frame. For SteamVR, this is done by the WaitGetPoses call. And then, your app can encode the render commands for that frame immediately after getting those inputs. And then, once you've encoded your command buffer, you can commit it to Metal to queue onto the GPU. And then, submit your left and right eye textures to SteamVR.

This'll wake the compositor so it can start encoding its GPU work for the frame. And then, since ordered execution matters, your app will also need to signal the VR compositor when work that is sent to the GPU has been scheduled. So, for a Metal SteamVR app, you simply wait until your command buffer has been scheduled, and then, you can call the SteamVR PostPresentHandoff function.

This'll signal the VR compositor that it can commit its work to the GPU and it'll get queued up in the right order. So, let's see what that would look like in your draw loop. So, at the top of your loop you'd have your WaitGetPoses call, to gather the inputs from the headset. You then, build your command buffer to render your scene and commit it to the GPU. And then, at this point, you'll submit your left and right eye textures to SteamVR. And then, once you've waited for that command buffer to be scheduled, you can call PostPresentHandoff to tell the VR compositor that it's now free to commit work to the GPU, as well.
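The ordering of that draw loop can be sketched with stand-in objects. Everything below is a hypothetical mock written for illustration: `FakeCompositor` and `FakeCommandBuffer` are not OpenVR or Metal classes, and their method names only mirror the calls named in the talk (WaitGetPoses, Submit, PostPresentHandoff, and waiting for the command buffer to be scheduled). The point is the sequence, not the API surface.

```python
class FakeCompositor:
    """Hypothetical stand-in for the VR compositor; records call order only."""

    def __init__(self, log):
        self.log = log

    def wait_get_poses(self):
        self.log.append("WaitGetPoses")
        return {"hmd": "predicted pose"}  # placeholder pose data

    def submit(self, eye):
        self.log.append(f"Submit({eye})")

    def post_present_handoff(self):
        self.log.append("PostPresentHandoff")


class FakeCommandBuffer:
    """Hypothetical stand-in for a Metal command buffer."""

    def __init__(self, log):
        self.log = log

    def encode_scene(self, poses):
        self.log.append("encode")

    def commit(self):
        self.log.append("commit")

    def wait_until_scheduled(self):
        self.log.append("waitUntilScheduled")


def render_frame(compositor, command_buffer):
    poses = compositor.wait_get_poses()    # 1. frame starts here, not at vblank
    command_buffer.encode_scene(poses)     # 2. encode render commands
    command_buffer.commit()                # 3. queue the work on the GPU
    compositor.submit("left")              # 4. hand both eye textures over
    compositor.submit("right")
    command_buffer.wait_until_scheduled()  # 5. app work is now ordered first
    compositor.post_present_handoff()      # 6. compositor may commit its work


log = []
render_frame(FakeCompositor(log), FakeCommandBuffer(log))
print(" -> ".join(log))
```

Calling the handoff only after the command buffer has been scheduled is what keeps the compositor's GPU work queued behind the app's work for the same frame.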

So, one more note before I move on. It's worth noting that as we extend this diagram to include the next frame, it's WaitGetPoses and not the vertical blank that defines the start of the frame for your app. This is an important thing to keep in mind, and we'll be coming back to this, very shortly.

Let's move on to talk about some best practices. So, the first is to avoid introducing a GPU bubble at the start of your frame, while encoding commands on the CPU. So, helpfully, SteamVR offers a useful mechanism to let you start work for that frame early, by giving your app a two to three millisecond running start.

So, this should look very familiar. By aligning the start of your frame with WaitGetPoses, you'll ensure that you're taking advantage of this optimization and getting that running start. Next, make sure that your app is not building large monolithic command buffers before committing them to the GPU, as this can also introduce GPU bubbles. Instead, you want to split your command buffers where possible and commit them as you go, to maximize GPU utilization in the frame. So, the next optimization that we recommend is to try and coalesce your left and right eye draws together. The Metal 2 Viewport Array feature provides a great mechanism for you to do this, by letting your app select a per primitive destination viewport in the vertex shader. This can substantially reduce your draw call overhead, as you can now render to both the left and the right eye with a single draw call.

So, let's take a look at an example of how you can adopt Viewport Array for your Metal app using instancing. So, the first thing I want to point out is that you'll need to create a texture that's twice as wide, because you'll now be rendering both the left and right eye scene to the same texture. And then, you simply create your viewport array that defines the bounds for your left and your right eye viewports. And then, you can pass this viewport array to your render command encoder using the new setViewports API. And then, at this point, you'll want to make an instanced drawPrimitives call with an instance count of 2 to issue the draw to your left and right viewports. We'll be using the instance ID as our eye index in the vertex shader. So, let's take a look at that vertex shader.
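Before turning to the shader, the host-side layout just described can be sketched numerically. This is an illustration only, not Metal code: the `Viewport` tuple and `side_by_side_viewports` helper are hypothetical names, and the per-eye size of 1080 x 1200 is an assumption (half of the Vive's 2160 x 1200 panel, with no scale factor applied).

```python
from typing import NamedTuple


class Viewport(NamedTuple):
    """Hypothetical viewport rectangle within the shared render target."""
    origin_x: float
    origin_y: float
    width: float
    height: float


def side_by_side_viewports(eye_width: int, eye_height: int):
    """Return (texture_size, [left, right]) for a double-wide render target."""
    texture_size = (2 * eye_width, eye_height)  # twice as wide as one eye
    left = Viewport(0, 0, eye_width, eye_height)
    right = Viewport(eye_width, 0, eye_width, eye_height)
    return texture_size, [left, right]


# Assumption: 1080 x 1200 per eye.
texture_size, viewports = side_by_side_viewports(1080, 1200)

# With an instance count of 2, the instance ID doubles as the eye index:
# instance 0 draws into the left viewport, instance 1 into the right.
for instance_id, viewport in enumerate(viewports):
    print(instance_id, viewport)
```

The array index of each viewport is what the vertex shader later selects per primitive, so the order of the list fixes which instance lands on which eye.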

So, the first thing I want to point out is that the viewport is selected by the new viewport_array_index attribute. And then, as I previously noted, we're using the instance_id as our eye index.

And we'll be able to use it to access our viewport dependent data like this model view projection matrix in this example. And then, finally, you do want to associate your viewport index with the instance_id so that the right viewport is selected when rasterizing your image. And that's how you use the new Viewport Array feature to reduce the draw call cost in your VR apps.

The final optimization that I want to talk about, today, is really a general best practice. You want to try and reduce the number of pixels that you're shading every frame. So, due to the nature of the lens warp, about 15% of your rendered scene is not actually visible on the VR headset. This is represented by the blue regions in this image.

Luckily, SteamVR offers a mesh based stencil mask that is specific to the Vive headset that you can use to cull these pixels. It's really easy to use and provides a substantial benefit. That brings us to the end of our brief journey into building a VR app. So, with that background covered, I'd like to now introduce Nat Brown from Valve Software, to come onstage and talk a bit more about SteamVR on macOS.

Hi, everybody. I work on VR at Valve. So, if you don't know Valve, we're a game company. We distribute games and we have a community of gamers that play on Steam. It turns out, games are actually a super-interesting crucible for user interface and human/computer interaction. And at Valve, we do a lot of experiments around games and input. So, years of VR prototypes really didn't click, for us, for making games or any other kind of content.

Until we found this sweet combination of 90 hertz low persistence displays and accurate room scale tracking with two tracked controllers. We consider this a magical threshold for VR. I like to think of it as kind of similar to how when you first used a smartphone with low latency accurate touch, it really felt magical. That's what this magical threshold is, for VR. Once room scale VR really clicked for us, we knew that we could build VR games and VR content. We license aspects of our VR technology like base stations, headset lens designs, and so forth, non-exclusively to partners like HTC and LG.

And we have a big program that licenses Lighthouse tracking technology to lots of different partners. Our approach to the software stack, which you've heard a little bit about already, is called the SteamVR runtime. SteamVR has an application model, up above, and a hardware and driver model, down below.

Our goal here is to promote experiments in VR, because we're really in the early days of what VR is and what the content's going to be like. We wanted to make this model so that people could experiment in VR hardware and content and reduce risk. So, you can go out, maybe not you, but some of you can go out and design a new headset or a new tracking system, or new controllers. And then, you can come to the OpenVR platform, and you can write a driver that plugs right in.

And you'll have access to all the existing content that's already running. That will give you real world tests that make your hardware better.

Because you and your customers can compare your new idea directly with other hardware that's already out there. So, hardware developers don't have to develop custom content and content developers don't have to bet on which hardware will win. They can just focus on making great content. So, applications link to the OpenVR framework. It's a really tiny library, and all it knows how to do is find the runtime that's currently installed. So, it finds this VR client library, that's a shared library, and that either connects to or launches the other runtime processes of SteamVR. The vrmonitor process.

You're going to see a lot of that little window. It's a UI and a settings application. It shows you the state of any attached headsets and controllers, and the tracking sensors. The vrserver is in charge of keeping track of drivers and loading alternate drivers. And it puts poses and other information into shared memory, so that your application and the rest of the SteamVR runtime have access to it. The vrcompositor, which you just heard a little bit about, is sort of like the window server.

It draws scenes and overlays to the headset. And it corrects images for lens distortion, color. And one of the things that's sort of under the hood, that you may not understand, is it corrects for smearing and ghosting under motion. And it also, fades into a stable tracking area when applications fail to meet frame rate. Because we don't want people to fall over or bump into something. So, the vrcompositor communicates with Metal, like you heard. It presents directly to the headset through the Direct to Display Metal 2 API.

Last, but not least, the mighty vrdashboard. That is a piece of UI that lets you select applications. It lets you control volume and other system settings. We provide a default one that shows you your Steam library and lets you choose applications.

But there's actually an API. So, you can write your own dashboard application, as well. So, Valve and Apple, we started working together more closely, about a year ago. And our port to Metal from OpenGL, it didn't cost us very much. Metal is a really cool API. And it was critical for us to get VR up and performing.

Our biggest request to Apple, a year ago, was for this Direct to Display feature. Because it's critical to ensure that the VR compositor has the fastest, most time-predictable path to the headset display panels. We also really needed super accurate low variance VBL, vertical blank, events. So that we could set the cadence of the VR frame presentation timing, and we could predict those poses accurately. Predicting the pose accurately is actually more important than the time between when motion happens and when the display happens. If we know when it's going to be, that's actually more important. Finally, we hit some speed bumps around inter-process and inter-thread synchronization.

Once everything else was working really well, Metal was blazing fast, we had really tight VBL, but we still were having some synchronization problems. But Apple helped us find better ways to signal and synchronize with low scheduling variance between all the processes and threads involved. So, my diagram of a VR frame is a little more complicated. Most of you are never going to look under the hood this far.

But I thought I'd show it to you, anyway. So, the low persistence OLED of the HTC Vive uses global illumination. All of the pixels of the display flash for a tiny period, all at once. And this is common in VR, because heads move really quickly. And we want to make sure that the image doesn't smear or tear in front of the user.

So, the panel illuminates for about two milliseconds, one frame after it is presented by the GPU, because the panel takes time to charge before it can do that global illumination pulse. So, over here, that's the photons coming out. We're going to follow this red frame through this sequence. So, way out here is the photons coming out. Because of this timing, applications typically pick up a pose, like you heard before, from IVRCompositor WaitGetPoses. So, WaitGetPoses stalls and returns with a pose for that future firing of photons. So, the rendering is happening here, and you present it there, here in the middle.

But the photons don't go out there. So, we've had to predict a pose about 25 milliseconds out. So, 25 milliseconds is two frames plus the little tiny bit of running start. And you heard how running start is very important, because we want to give you as much of that 11 milliseconds of GPU time as possible to get the most excellent pixels you can up in front of the users. So, one last thing that's happening here.
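As a quick check on the 25 millisecond figure above, here is a minimal arithmetic sketch. It assumes a 90 Hz panel (the HTC Vive's refresh rate, consistent with the roughly 11 millisecond frame budget mentioned in the talk) and an illustrative 3 millisecond running start. The running start value and the function name are assumptions for illustration, not numbers or APIs from the talk.

```python
# Assumption: a 90 Hz panel, which gives the roughly 11 ms frame budget
# mentioned in the talk.
REFRESH_HZ = 90.0
FRAME_MS = 1000.0 / REFRESH_HZ  # about 11.1 ms per frame

# Assumption: the "running start" head start is a few milliseconds.
# 3 ms is an illustrative value, not a number given in the talk.
RUNNING_START_MS = 3.0


def prediction_horizon_ms(frames_ahead: int,
                          running_start_ms: float = RUNNING_START_MS) -> float:
    """How far ahead a pose must be predicted, in milliseconds.

    frames_ahead is the number of whole frame periods between the moment
    the pose is requested and the moment the panel emits photons.
    """
    return frames_ahead * FRAME_MS + running_start_ms


if __name__ == "__main__":
    print(f"frame period: {FRAME_MS:.1f} ms")
    # Two frames plus the running start, as described in the talk.
    print(f"render pose horizon: {prediction_horizon_ms(2):.1f} ms")
```

With these assumed values, two frame periods plus the running start come out at roughly 25 milliseconds, which matches the prediction distance quoted above.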

You see this frame actually stretches back all the way to the beginning of the slide. And that's because this application, and your game engine might be doing this under the covers, is kind of more complicated. It has some physics and input event processing, that it needs to do. And that's costly work that's going to take some CPU time, over here. But that code actually needs pose information, also. It needs to know when the buttons were pressed and where the controllers were. Maybe it's interpolating something having to do with motion, or you're blocking something, or you're firing something.

So, this thread is actually going to wake up at around the same time, because WaitGetPoses gives it this important kind of sync point. But it's going to be calling a different API, because it's trying to get a pose much further out. It's looking 36 milliseconds out, and so it's going to be calling GetDeviceToAbsoluteTrackingPose. So, I tell you this just so you know: OpenVR has some pretty deep APIs for you to tune your applications, so that you're predicting very accurately where the headset and the controllers are going to be, based on where your code needs them to be. So, the point of WaitGetPoses is that it gives you a predictable point in time at running start, so you always know when those photons are going to come out.

So, last but not least, let's talk about what you need to do to get up and running with SteamVR on macOS. So, first of all, SteamVR is a tool in Steam, so as a developer you get started by installing Steam and registering for a free account.

And if you don't use Steam, you should. Next, install SteamVR itself. SteamVR is under the Library menu in Tools. Search for SteamVR, right click on it, choose Properties, choose the Beta tab, and opt into the Beta. For now, it's a beta. Then, install it. We'll be keeping SteamVR up to date as we fix any bugs that you find.

Finally, you want to download the OpenVR headers and the framework from GitHub. And I've put a link, right up there, for you in the slides.

So, here's the funky part. You need to include that OpenVR bootstrapping framework inside of your application. The OpenVR framework that you link to conveys the version of the interfaces of the runtime you've built and tested against. And that allows us to upgrade the runtime and to version forward, gracefully.

Because we move this forward quite actively. In Xcode, instead of just adding the framework to your link phase, go into General Settings and make it an embedded binary. So, it will be installed into the Contents/Frameworks portion of your application bundle.

Finally, we really want your feedback. So, we put some things right into the UI of vrmonitor. There's a pointer to SteamVR's support site and the community hardware discussions.

And you can report a bug, create a system report, and send it to us or probably send it to me. You can reach me at [email protected], but I'd rather you use the tool. And with that, thanks very much. I'm really looking forward to what you guys make with VR. And thanks to everybody at Apple for making VR shine on macOS.

It's been great working with Valve, and I'm still astounded about what we've been able to achieve over the last year.

Let's move on to talk about the external GPU support that we've added with macOS High Sierra. So, an external GPU is a standalone chassis with a desktop class GPU in it, that you can plug directly into your host system via Thunderbolt. And as noted previously, the primary motivation here was to enable developers like you to build great VR apps using a broader range of Mac hardware. There's a great workflow story here, where you can use your MacBook Pro with an external GPU to get the rendering horsepower that you need for VR. But of course, there's also additional performance benefit to be had for other GPU bound cases, like games and pro apps, as well. And as you saw on Monday, we've been partnering with Sonnet and AMD to offer you an external graphics developer kit with an AMD Radeon RX 580 GPU in it.

This kit is optimized for use with all our Thunderbolt 3 capable Macs, and is available for purchase through our developer program, today. Let's dive right into how you identify the external GPU. This device enumeration code should look very familiar to you.

MTLCopyAllDevices will give you all the Metal devices in the system. And then, you can identify the external GPU by simply looking for the removable property in the device. This is very similar to how you previously identified the low power devices on our platforms.

Now, let's talk a bit about Thunderbolt bandwidth capabilities. So, Thunderbolt 3 offers twice the theoretical bandwidth of Thunderbolt 2, which is great. But you have to keep in mind that this is still a quarter the bandwidth of the PCI bus available to the internal GPUs in our platforms.

So, this is important. You have a choice, now, between using the internal GPU with a high bandwidth link, or a high performance external GPU with a link at about a quarter the bandwidth. So, you need to treat the link and the GPU as a pair when deciding which GPU you use.

Additionally, users can now attach displays to different GPUs. And in this environment, there's a penalty to render on one GPU and then display on another, as that data needs to be transferred across the link. So, where your content is displayed is clearly a huge consideration when you decide which GPU you want to use, as well. So, there's additional complexity here. But fortunately, there are a couple of simple things that you can do to make your app a good citizen in a multi-GPU environment.

So, let's start with GPU selection. The best advice that we can give you is to render on the same GPU that's driving the display your app is on. I call this the golden rule of GPU selection. So, let's extend this and build a decision tree.

So, if the content your app is rendering will be presented to a display, you want to select the GPU that's driving that display. This is our golden rule. However, if your app is doing compute or other offline rendering operations, then you need to decide if you prefer to use the low power GPU if it's available. This can be particularly useful on our portables, where selecting this device can have a substantial battery savings. But of course, if you need the GPU horsepower for things like VR, you're going to want to select the external GPU.

So, let's get back to our golden rule and find out how you identify the Metal device that's driving a particular display. Well, it turns out that this is really easy to do.
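As an aside, the decision tree just described can be written down as a small function. This is a plain Python model of the logic only, not Metal code: the Gpu record, the select_gpu function and its parameters are hypothetical names introduced for illustration, and the final fallback to the first device is an assumption the talk does not spell out.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Gpu:
    """Hypothetical stand-in for a Metal device, with only the flags the talk mentions."""
    name: str
    low_power: bool = False
    removable: bool = False  # True for an external GPU


def select_gpu(
    devices: Sequence[Gpu],
    display_gpu: Optional[Gpu] = None,
    needs_gpu_horsepower: bool = False,
    prefer_low_power: bool = False,
) -> Gpu:
    """Pick a GPU following the decision tree from the talk."""
    if not devices:
        raise ValueError("no GPUs available")
    # Golden rule: content presented to a display renders on that display's GPU.
    if display_gpu is not None:
        return display_gpu
    # Compute or offline work that needs horsepower: use the external GPU if present.
    if needs_gpu_horsepower:
        for gpu in devices:
            if gpu.removable:
                return gpu
    # Otherwise optionally favor the low power GPU, if it's available.
    if prefer_low_power:
        for gpu in devices:
            if gpu.low_power:
                return gpu
    # Assumption: fall back to the first device when no preference matches.
    return devices[0]


if __name__ == "__main__":
    devices = [Gpu("discrete"), Gpu("integrated", low_power=True),
               Gpu("external", removable=True)]
    print(select_gpu(devices, needs_gpu_horsepower=True).name)
    print(select_gpu(devices, prefer_low_power=True).name)
```

Here display_gpu stands for the Metal device driving the display the window is on, which is the lookup the talk describes next.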

There's an existing Core Graphics API that will give you this device. You simply have to get the ID for the display that your window is on, by querying the NSScreenNumber.

And then, call CGDirectDisplayCopyCurrentMetalDevice to get the Metal device that's driving that display. It's that simple. Now that we've established that each display can be attached to a different GPU, your app will need to handle GPU migration as your displays are moved. Sorry, as your window is moved across those displays.

So, you can do that by registering for the new, well, it's not new, our existing notification handler called WindowDidChangeScreen. So, let's take a look at what you do with this notification handler. So, you'll want to start by finding the Metal device for the display your app is now on, by calling the Core Graphics API that we previously discussed. You can early out if it's the same device that you're currently rendering to, since no GPU migration will be required.

And then, you'll want to perform your device migration and switch to using the new device for all your rendering. So, that's how you use a display change notification to handle GPU migration. But what about the case where the external GPU is plugged in, or unplugged from your system?

Well, Metal 2 introduces three new notifications to help you with this case. These are DeviceWasAdded when an external GPU is plugged in, DeviceWasRemoved when it's unplugged, and DeviceRemovalRequested when the OS signals an intent to remove a GPU at some point in the future. So, let's take a look at how you would register for, and then respond to, these notifications.

So, you'll want to use the new MTLCopyAllDevicesWithObserver API that we've introduced with Metal 2. This will let you register a handler for these new device change notifications. In this case, we're simply invoking a function called handleGPUHotPlug. So, let's take a look at it. It's really straightforward. All you have to do is check for and directly respond to each notification.

But I want to point out a couple of things, here. The first is that your app should treat the DeviceRemovalRequested notification as a hint to start migrating off the external GPU. And second, if your app did not receive the DeviceRemovalRequested notification, then it should treat DeviceWasRemoved as an unexpected GPU removal. So, an unexpected GPU removal is when your external GPU is disconnected or powered down without the OS being aware. So, this is the equivalent of somebody reaching into your system and yanking out that GPU.

And since the hardware is no longer there, some Metal API calls will start returning errors. So, you'll want to add defensive code to your app to protect against this, so it can survive until it receives a migration notification and can gracefully switch to another GPU in the system. It's also worth pointing out that if you had any transient data in the external GPU's local memory, your app may need to regenerate this, as it's no longer there.
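The display-change and hot-plug handling described above can be modeled with a little bookkeeping. The sketch below is plain Python, not Metal code. Devices are just strings, and the GpuTracker class, its method names and the "first remaining device" fallback are hypothetical choices made for illustration. Only the three notification kinds and the handleGPUHotPlug name come from the talk.

```python
from enum import Enum, auto


class Notification(Enum):
    # Mirrors the three Metal 2 notifications named in the talk.
    WAS_ADDED = auto()
    REMOVAL_REQUESTED = auto()
    WAS_REMOVED = auto()


class GpuTracker:
    """Hypothetical app-side bookkeeping; devices are plain strings here."""

    def __init__(self, devices, current):
        self.devices = list(devices)
        self.current = current          # device the app renders on
        self.removal_requested = set()  # devices the OS has warned us about
        self.log = []                   # (old, new, reason) per migration

    def _migrate(self, new_device, reason):
        self.log.append((self.current, new_device, reason))
        self.current = new_device

    def _fallback(self, leaving):
        # Assumption: migrate to the first remaining device.
        for device in self.devices:
            if device != leaving:
                return device
        raise RuntimeError("no GPU left to migrate to")

    def on_window_did_change_screen(self, display_device):
        """Display-change path: early out when the device is unchanged."""
        if display_device == self.current:
            return False
        self._migrate(display_device, "display changed")
        return True

    def handle_gpu_hot_plug(self, device, notification):
        if notification is Notification.WAS_ADDED:
            if device not in self.devices:
                self.devices.append(device)
        elif notification is Notification.REMOVAL_REQUESTED:
            # Treat the request as a hint to start moving off the device.
            self.removal_requested.add(device)
            if device == self.current:
                self._migrate(self._fallback(device), "removal requested")
        elif notification is Notification.WAS_REMOVED:
            expected = device in self.removal_requested
            self.removal_requested.discard(device)
            if device in self.devices:
                self.devices.remove(device)
            if device == self.current:
                # No prior request means the GPU was yanked unexpectedly;
                # transient data in its local memory must be regenerated.
                reason = "removal completed" if expected else "unexpected removal"
                self._migrate(self._fallback(device), reason)


if __name__ == "__main__":
    tracker = GpuTracker(["internal"], current="internal")
    tracker.handle_gpu_hot_plug("eGPU", Notification.WAS_ADDED)
    tracker.on_window_did_change_screen("eGPU")
    tracker.handle_gpu_hot_plug("eGPU", Notification.WAS_REMOVED)
    print(tracker.current, tracker.log[-1])
```

In a real app, the migration step would also recreate resources on the new device, and any Metal call made between the unexpected removal and the migration would need the defensive error handling mentioned above.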
